x-crawl

x-crawl is a flexible nodejs crawler library. Used to crawl pages, crawl interfaces, crawl files, and poll crawls. Flexible and simple to use, friendly to JS/TS developers.

If you like x-crawl, you can give x-crawl repository a star to support it, not only for its recognition, but also for Approved by the developer.

Features

- 🔥 Async/Sync – Just change the mode property to toggle async/sync crawling mode.

- ⚙️Multiple functions – Can crawl pages, crawl interfaces, crawl files and poll crawls. And it supports crawling single or multiple.

- 🖋️ Flexible writing method – A function adapts to multiple crawling configurations and obtains crawling results. The writing method is very flexible.

- ⏱️ Interval crawling – no interval/fixed interval/random interval, can effectively use/avoid high concurrent crawling.

- 🔄 Retry on failure – It can be set for all crawling requests, for a single crawling request, and for a single request to set a failed retry.

- 🚀 Priority Queue – Use priority crawling based on the priority of individual requests.

- ☁️ Crawl SPA – Batch crawl SPA (Single Page Application) to generate pre-rendered content (ie “SSR” (Server Side Rendering)).

- ⚒️ Controlling Pages – Headless browsers can submit forms, keystrokes, event actions, generate screenshots of pages, etc.

- 🧾 Capture Record – Capture and record the crawled results, and highlight them on the console.

- 🦾 TypeScript – Own types, implement complete types through generics.

Example

Take some pictures of Airbnb hawaii experience and Plus listings automatically every day as an example:

// 1.Import module ES/CJS

import xCrawl from 'x-crawl'

// 2.Create a crawler instance

const myXCrawl = xCrawl({ maxRetry: 3, intervalTime: { max: 3000, min: 2000 } })

// 3.Set the crawling task

/*

Call the startPolling API to start the polling function,

and the callback function will be called every other day

*/

myXCrawl.startPolling({ d: 1 }, async (count, stopPolling) => {

// Call crawlPage API to crawl Page

const res = await myXCrawl.crawlPage([

'https://zh.airbnb.com/s/hawaii/experiences',

'https://zh.airbnb.com/s/hawaii/plus_homes'

])

// Store the image URL

const imgUrls = []

const elSelectorMap = ['.c14whb16', '.a1stauiv']

for (const item of res) {

const { id } = item

const { page } = item.data

// Gets the URL of the page's wheel image element

const boxHandle = await page.$(elSelectorMap[id - 1])

const urls = await boxHandle.$$eval('picture img', (imgEls) => {

return imgEls.map((item) => item.src)

})

imgUrls.push(...urls)

// Close page

page.close()

}

// Call the crawlFile API to crawl pictures

myXCrawl.crawlFile({

requestConfigs: imgUrls,

fileConfig: { storeDir: './upload' }

})

})

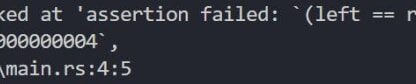

running result:

Note: Do not crawl at will, you can check the robots.txt protocol before crawling. This is just to demonstrate how to use x-crawl.

More

For more detailed documentation, please check: https://github.com/coder-hxl/x-crawl